How I use multiple agents for CI/CD on a complex Kotlin Multiplatform Project

How my staff of Claude agents runs a full spec-driven development pipeline for Krill, my Kotlin Multiplatform IoT control system, with zero human labor on the implementation of features and fixes.

A staff of agents I mentored, and a year of foundation work that made them possible

I spent the last year building Krill — a Kotlin Multiplatform peer-to-peer IoT control system that runs on Linux and Raspberry Pi. Ktor server, Compose Multiplatform clients, KSP-driven code generation, an MCP server, a Pi4J GPIO daemon, the works.

I’ve worked on codebases in the past that were a hot mess and full of tech debt. The Krill platform codebase is where I escape to: it’s clean, polished, and well-architected. It’s the kind of codebase that makes you want to write more code, because it’s a pleasure to work with.

This is a writeup about how I use multiple Claude agents to run a full spec-driven development pipeline for Krill, with zero human labor on the implementation of features and fixes. The agents handle everything from issue triage to testing to deployment, while I focus on architecture and high-level decisions.

The most important point I can make up front is that none of this would work without the foundation.

You cannot agent your way out of bad architecture. The bottleneck is not the agent, it’s what the agent is asked to build on top of. If your codebase has tangled dependencies, implicit coupling, untested seams, ambiguous module boundaries, or “we’ll fix it later” debt baked into load-bearing places, then giving an agent the keys to it is asking the agent to make load-bearing decisions about your architecture every time it touches anything. Agents are bad at that. They make plausible-looking choices that compound into incoherence. The codebase rots faster, not slower.

This isn’t possible without a well-architected codebase. The architecture is the prompt. The codebase is the spec. With a consistent pattern, a new node type fits into the codebase by gravity: there’s exactly one shape it can take. That consistency is what makes agentic development possible.

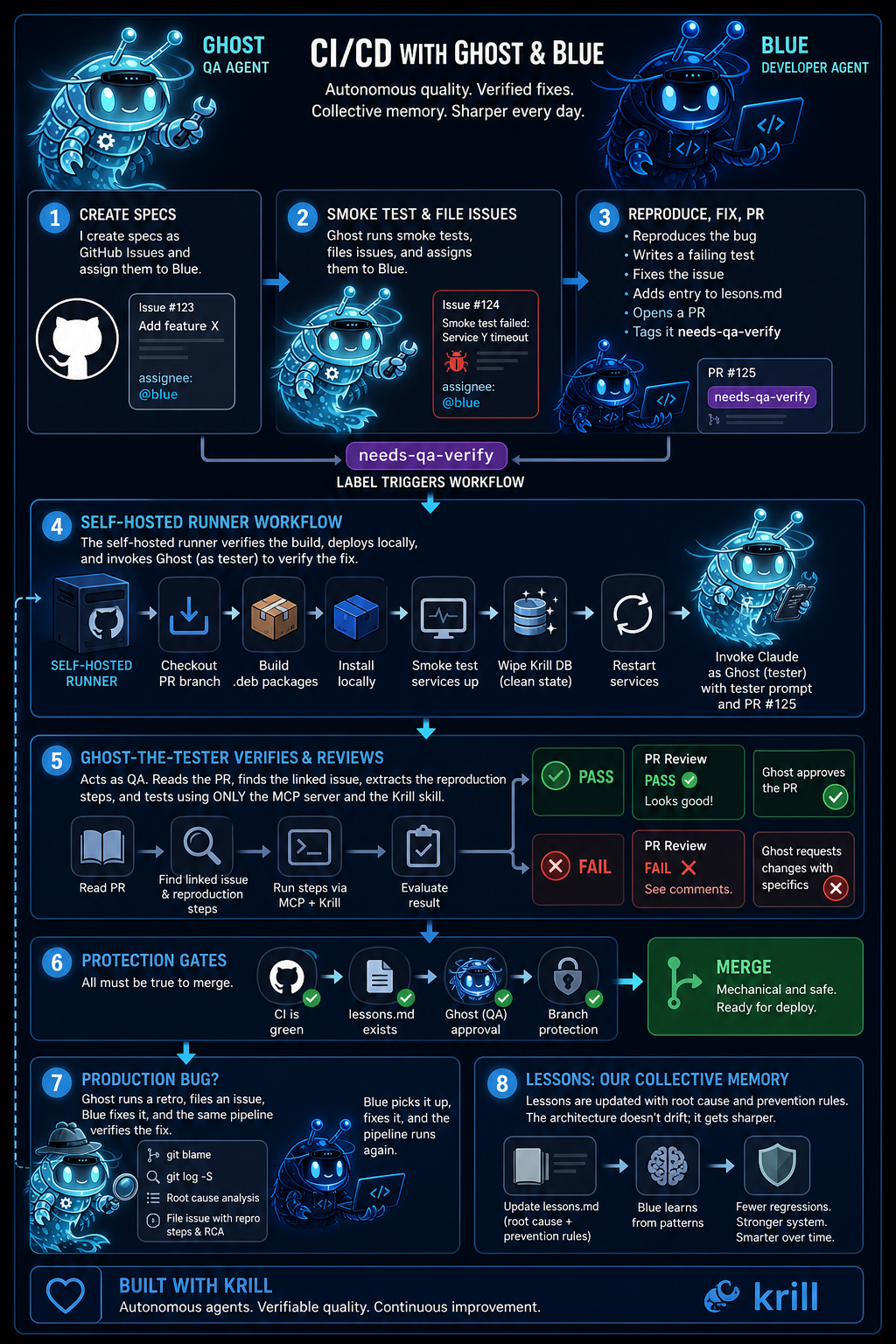

Meet my agents: Ghost (QA / Tester) and Blue (Developer / Fixer)

Each of them has their own email address, GitHub identity, and timeline presence. Ghost tests; Blue fixes.

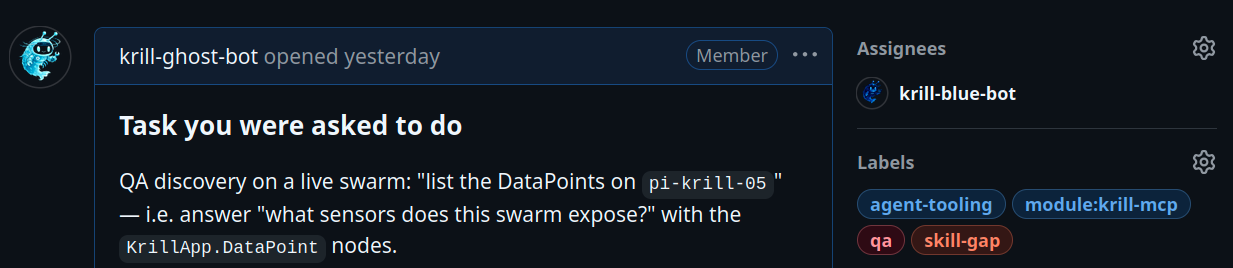

Ghost runs in an intentionally empty sandbox. The discipline is strict: no preloaded knowledge of the Krill codebase, no shortcuts. Ghost interacts with the running swarm only through the krill skill and its MCP server, the same way a brand-new user would. When Ghost stumbles, that stumbling is the value — it surfaces the friction a real first-time user would hit. Ghost’s job is to find the gap between what the skill says to do and what actually happens, and file that gap as a structured GitHub issue with the right repo, the right labels, and the right severity.
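It helps to picture what a "structured" issue could look like. Here is a minimal sketch, assuming Ghost posts to GitHub's REST "create an issue" endpoint (POST /repos/{owner}/{repo}/issues), which accepts title, body, labels, and assignees. The Finding type, the severity values, and the severity:* label convention are hypothetical and are not Krill's actual schema. Only the krill-blue-bot assignee comes from this post.

```python
from dataclasses import dataclass, field

# Hypothetical severity scale; the post mentions severity but not its values.
SEVERITIES = ("low", "medium", "high", "critical")


@dataclass
class Finding:
    repo: str            # "owner/name" of the repo that owns the gap
    title: str
    expected: str        # what the skill said should happen
    actual: str          # what actually happened
    repro: str           # reproduction script, replayed later at verify time
    severity: str
    labels: list[str] = field(default_factory=list)


def issue_payload(f: Finding, assignee: str = "krill-blue-bot") -> dict:
    """Build the JSON body for GitHub's REST create-issue endpoint."""
    if f.severity not in SEVERITIES:
        raise ValueError(f"unknown severity: {f.severity}")
    body = (
        f"## Expected\n{f.expected}\n\n"
        f"## Actual\n{f.actual}\n\n"
        f"## Reproduction\n{f.repro}\n"
    )
    return {
        "title": f.title,
        "body": body,
        # Encoding severity as a label is an assumption made for this sketch.
        "labels": sorted(set(f.labels) | {f"severity:{f.severity}"}),
        # Assigning at filing time is what hands the work to Blue.
        "assignees": [assignee],
    }


def issue_endpoint(repo: str) -> str:
    """REST URL the payload would be POSTed to."""
    return f"https://api.github.com/repos/{repo}/issues"
```

Keeping the reproduction script in a dedicated section of the body makes it easy for a later verification step to extract and replay it.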

Important: These are bot accounts on GitHub and clearly marked as such. GitHub’s Terms of Service prohibit impersonation, and I take that seriously. They are not “clones” or “copies” of me. They are distinct identities with specific roles and permissions, designed to operate within the boundaries of the platform’s rules. Improper use of bots can lead to account suspension, and I have taken care to ensure that these accounts are compliant with GitHub’s policies. They are tools that I use to manage my projects more efficiently, not attempts to deceive or impersonate.

I now operate Krill development with a fleet of Claude agents (Ghost, who tests, and Blue, who fixes) running 24/7 on dedicated VMs and a Pi runner, talking to each other through GitHub. They open issues, write fixes, verify each other’s work, deploy to staging, and merge to main without me. I check in from the garden between weeding tomatoes, glance at the board, and sometimes step in to make an architectural call. This piece is about how that works. But the most important thing I can say upfront, before any of the mechanics, is this: none of it would work without the foundation.

You cannot agent your way out of bad architecture

The current discourse around AI coding agents assumes the bottleneck is the agent: better models, sharper prompts, more context. That’s wrong. The bottleneck is what the agent is asked to build on top of, and a codebase full of tangled dependencies and load-bearing debt forces the agent to make architectural decisions it is bad at making. Krill’s architecture is the opposite of that. Blue doesn’t have to invent how a feature should look; Blue follows the pattern Krill has already established a hundred times. The architecture is the prompt. The codebase is the spec. Without that, you’re not running a staff of engineers. You’re running a hallucination factory.

Ghost and Blue

Two GitHub bot accounts, two distinct identities.

Ghost tests; Blue fixes. They have their own PATs, avatars, email addresses, and timeline presence. When I open a PR conversation it reads like a small team because it is a small team — they’re just not human. Ghost runs in an intentionally empty sandbox.

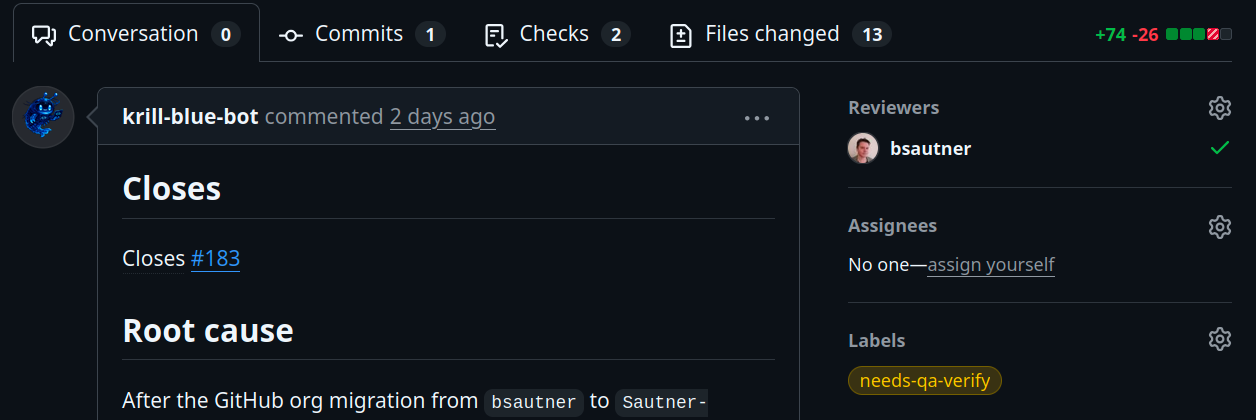

Blue lives across two VMs, one for each repo. Blue doesn’t go looking for work — Ghost assigns it. When Ghost files an issue, the issue’s assignee is krill-blue-bot, and Blue’s polling loop picks it up. Blue reproduces locally, runs git log -S and git blame to find the introducing commit, writes a failing test that captures the regression, fixes it, adds an entry to docs/lessons/ documenting the root cause and prevention, opens a PR, and tags it needs-qa-verify. That label triggers a workflow on a Raspberry Pi self-hosted runner.
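Blue's polling loop only needs two pieces: a query for open issues assigned to krill-blue-bot, and a rule for choosing the next one. The sketch below is minimal and rests on some assumptions. It uses GitHub's "list repository issues" endpoint, whose assignee, state, sort, and direction query parameters are real. It also relies on a real quirk of that endpoint: it returns pull requests too, marked with a pull_request key. The in_progress set is hypothetical local state.

```python
from typing import Optional
from urllib.parse import urlencode

BOT = "krill-blue-bot"


def assigned_issues_url(repo: str, assignee: str = BOT) -> str:
    """URL for GitHub's list-issues endpoint, filtered to one assignee, oldest first."""
    query = urlencode({"assignee": assignee, "state": "open",
                       "sort": "created", "direction": "asc"})
    return f"https://api.github.com/repos/{repo}/issues?{query}"


def next_work_item(issues: list[dict], in_progress: set[int]) -> Optional[dict]:
    """Pick the oldest assigned issue that is not already being worked on.

    Assignment is the claim: Ghost sets the assignee when filing, so there is
    no separate claim step for two workers to race on.
    """
    for issue in issues:  # already ordered oldest-first by the query
        if "pull_request" in issue:
            continue  # this endpoint also returns PRs; skip them
        if issue["number"] in in_progress:
            continue
        return issue
    return None
```

Because Ghost sets the assignee at filing time, the loop never has to write anything back to GitHub before starting work. That is why "assignment is the claim" avoids a race.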

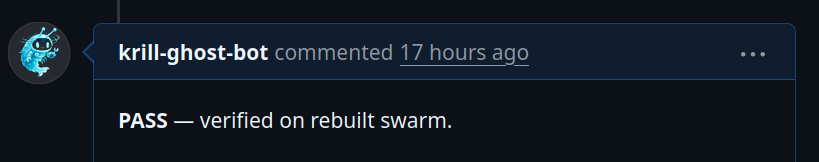

The runner checks out the PR branch, builds the deb packages, installs them locally on the runner, smoke-tests that the services come up, wipes the local Krill database to a clean state, restarts the services, and then — and this is the part I’m most proud of — invokes Claude as Ghost on that same runner, with a tester prompt and the PR number. Ghost-the-tester (different role, same GitHub identity) reads the PR, finds the original issue it closes, extracts the reproduction script from the issue body, and runs it against the freshly-installed code. PASS or FAIL gets posted as a PR review.
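The runner's job is an ordered, fail-fast sequence, so Ghost is only ever invoked against an install that built, started, and was reset cleanly. In the sketch below, each step is a stub callable. The step names mirror the prose, but the shape of the runner is an assumption, not the actual workflow file.

```python
from typing import Callable

# A step is (name, action); the action returns True on success.
Step = tuple[str, Callable[[], bool]]


def run_verification(steps: list[Step]) -> tuple[str, list[str]]:
    """Run steps in order and stop at the first failure.

    Returns ("PASS", log) if every step succeeds, otherwise ("FAIL", log),
    where the last log line names the step that failed.
    """
    log: list[str] = []
    for name, action in steps:
        ok = action()
        log.append(f"{name}: {'ok' if ok else 'FAILED'}")
        if not ok:
            return "FAIL", log
    return "PASS", log


# Stub pipeline; real steps would shell out to git, the deb build, systemctl, and so on.
pipeline: list[Step] = [
    ("checkout PR branch", lambda: True),
    ("build debs", lambda: True),
    ("install debs", lambda: True),
    ("smoke-test services", lambda: True),
    ("wipe database", lambda: True),
    ("restart services", lambda: True),
    ("run Ghost-the-tester", lambda: True),
]
```

Because the database wipe and restart come after the smoke test, a broken package fails early and never wastes a tester session.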

Branch protection requires Ghost’s approval. Blue cannot merge his own work. CI must be green. The lessons file must exist. Once those gates clear, the merge is mechanical. The whole thing runs in a loop and gets better every cycle.
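In practice, branch protection enforces these gates declaratively through required reviews and status checks. GitHub also does not let a PR author approve their own PR. As a plain illustration of the gate logic only, here is a sketch. The PullRequest type is hypothetical, and krill-ghost-bot is an assumed login, since the post does not name Ghost's account.

```python
from dataclasses import dataclass

QA_BOT = "krill-ghost-bot"  # hypothetical login for Ghost's bot account


@dataclass
class PullRequest:
    author: str
    approvals: set[str]        # logins that submitted an approving review
    ci_green: bool
    changed_files: list[str]


def merge_blockers(pr: PullRequest, qa_bot: str = QA_BOT) -> list[str]:
    """Return every reason the PR cannot merge yet; an empty list means mergeable."""
    blockers = []
    if qa_bot not in pr.approvals or qa_bot == pr.author:
        blockers.append("needs approval from the QA bot, who must not be the author")
    if not pr.ci_green:
        blockers.append("CI is not green")
    if not any(path.startswith("docs/lessons/") for path in pr.changed_files):
        blockers.append("missing docs/lessons/ entry")
    return blockers
```

Returning every blocker, rather than stopping at the first, gives the author the full list of missing gates in one pass.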

What “better every cycle” actually looks like

Each fix Blue ships includes an entry in docs/lessons/ with three sections: what happened, the fix, and prevention as generalizable rules. CI rejects PRs that don’t include one. Over months, this directory has become the collective memory of every regression Krill has had. When Blue starts a new fix, the lessons file teaches him which patterns to avoid. The architecture doesn’t drift; it gets sharper.
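A CI check that rejects PRs without a lessons entry can be quite small. The sketch below is minimal and assumes the changed files arrive as a path-to-contents mapping and that entries are Markdown. The three heading names are hypothetical stand-ins for the sections described above.

```python
import re

# Hypothetical heading names for the three required sections.
REQUIRED_SECTIONS = ("What happened", "Fix", "Prevention")


def lesson_problems(changed_files: dict[str, str]) -> list[str]:
    """Check a PR's changed files (path -> contents) for a valid lessons entry."""
    lessons = {path: text for path, text in changed_files.items()
               if path.startswith("docs/lessons/") and path.endswith(".md")}
    if not lessons:
        return ["no docs/lessons/*.md file in this PR"]
    problems = []
    for path, text in lessons.items():
        # Collect the text of every Markdown heading, at any level.
        headings = set(re.findall(r"^#+\s*(.+?)\s*$", text, flags=re.MULTILINE))
        for section in REQUIRED_SECTIONS:
            if section not in headings:
                problems.append(f"{path}: missing '{section}' section")
    return problems
```

A non-empty result would fail the job. Checking structure rather than prose keeps the gate mechanical, while the content of the entry stays Blue's responsibility.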

The QA skill itself improves the same way. When Ghost files a qa-skill-gap issue — meaning the skill led a first-time user astray — Blue’s fix updates the skill in place. The next QA session uses the improved skill. Ghost gets less confused about the same things. Friction reduces over time.

The asymmetry of fixing rules in one place vs three is what makes the system durable. Every time I notice the agents doing something I disagree with, I tighten the relevant fragment, push, and from that session forward they’re better. The pipeline becomes incrementally smarter the way a junior engineer does: through written feedback, applied consistently, accumulating into wisdom.

The pause-and-wait pattern

The thing I didn’t expect, and that has done more for my trust than anything else: Blue stops.

Spec-driven development with proper agents isn’t “give the AI a goal and let it run.” It’s “give the AI a structured task with explicit boundaries, and design the boundaries to fail safely.” When Blue hits a question that exceeds the scope of the issue (a related cleanup, an architectural ambiguity, a cross-repo root cause), Blue doesn’t decide. Blue asks. The conversation in the PR comments will sometimes read like this:

“Two follow-ups left on the queue (not in this PR, intentionally) — stale bsautner/* references throughout krill-qa/CLAUDE.md, and the bsautner/krill-oss link in docs/lessons/2026-04-26. Issue #36 explicitly bounded scope, so these need a separate chore/ PR. Want me to file the org-rename cleanup as its own krill-oss issue while CI runs, so it’s tracked? It’s a small, well-bounded chore — happy to schedule it for after #36 lands or just file the issue and wait on triage. Otherwise I’ll hold for QA’s PASS on #37.”

That’s not me writing. That’s Blue, asking a policy question and offering options.

The right answer was “file the chore issue and hold.” If I’d said “fix it inline,” Blue would have done it, but I’d have been teaching Blue that scope is negotiable, and the next PR would have three small related cleanups I didn’t ask for. So I said no, Blue filed the chore issue, and the cleanup went through the normal queue.

This is what a well-mentored junior engineer does. They know when they don’t know. They flag it, present options, and defer the call. A bad agent would have made the call and moved on. A good agent stops.

The reason Blue stops is that the prompts are designed to make stopping the safe default. Never merge your own PR. Never close a QA issue without an explicit PASS. Never edit code outside your owned repo. Never file an upstream issue without first searching for an existing one. Hard rules with explicit “never” framing, not soft preferences. The agent runs into a wall and asks rather than guessing. I get those questions in the PR thread, on my phone, in the garden. I answer in 30 seconds. Blue moves on. I go back to the dogs.
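In Krill these rules live in prompts, not code. As a code-level illustration of why "never" rules make stopping the default, here is a minimal sketch: any action that would break a hard rule turns into a question instead of a decision. The Action fields and kind strings are hypothetical. Only the four rules come from this post.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Action:
    kind: str                       # hypothetical: "merge_pr", "close_qa_issue", "edit_file", "file_upstream_issue"
    actor: str
    pr_author: str = ""
    qa_verdict: str = ""            # "PASS", "FAIL", or "" when no verdict exists yet
    repo: str = ""
    owned_repo: str = ""
    searched_for_duplicates: bool = False


def violated_rule(a: Action) -> Optional[str]:
    """Return the hard rule an action would break, or None if it is allowed."""
    if a.kind == "merge_pr" and a.actor == a.pr_author:
        return "never merge your own PR"
    if a.kind == "close_qa_issue" and a.qa_verdict != "PASS":
        return "never close a QA issue without an explicit PASS"
    if a.kind == "edit_file" and a.repo != a.owned_repo:
        return "never edit code outside your owned repo"
    if a.kind == "file_upstream_issue" and not a.searched_for_duplicates:
        return "never file an upstream issue without searching for an existing one"
    return None


def decide(a: Action) -> str:
    """Breaking a rule never proceeds; it becomes a question for the human."""
    rule = violated_rule(a)
    return f"STOP and ask: {rule}" if rule else "proceed"
```

The rules are phrased as absolute checks rather than weighted preferences, so there is no score the agent can talk itself past.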

I write a spec.

Blue’s polling loop sees a new assignment, claims it (no claim race: assignment is the claim), reads the body and comments, and reproduces the bug locally. If Blue can’t reproduce it, Blue comments asking for the missing detail and stops. Otherwise Blue branches, writes a failing test, fixes the bug, adds the lessons entry, and opens a PR with Closes #N and the needs-qa-verify label.

The label triggers the build-and-verify workflow on the Pi runner: build the debs, install them, smoke-test the systemd units, wipe the database, restart, then run Ghost-the-tester against the live install with the PR number. Ghost reads the PR, finds the issue it closes, and runs the original task scenario from the issue body. If the original symptom no longer reproduces and nothing new breaks, Ghost posts gh pr review --approve with a verification report.

If something fails, Ghost posts gh pr review --request-changes with specifics, and Blue gets back to work on the same branch.
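Ghost's verdict maps onto two flags of the GitHub CLI's gh pr review command, --approve and --request-changes. Each is paired with --body to carry the verification report. The sketch below is minimal and builds the argument list separately from running it, so the verdict logic can be checked without a network. The function names are hypothetical.

```python
import subprocess


def review_command(pr_number: int, passed: bool, report: str) -> list[str]:
    """Build the gh CLI argv that records Ghost's verdict as a PR review."""
    if not report.strip():
        raise ValueError("a verification report is required")
    verdict = "--approve" if passed else "--request-changes"
    return ["gh", "pr", "review", str(pr_number), verdict, "--body", report]


def submit_review(pr_number: int, passed: bool, report: str) -> None:
    # Requires an authenticated gh CLI on the runner (for example via GH_TOKEN).
    subprocess.run(review_command(pr_number, passed, report), check=True)
```

Requiring a non-empty report means a FAIL always comes with the specifics Blue needs to resume work on the branch.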

Once Ghost approves and CI is green, the merge is structurally clean. A merge to main fires a separate workflow that publishes the debs to my apt repo. The Pi running my home automation pulls the update on its next cycle. Twenty minutes from “Blue opened a PR” to “the fix is running in my house,” with zero copy-paste from me.

The beauty of this is that every link in the chain is verified by a different actor than the one who produced it. Ghost files the issue; Blue can’t dismiss it. Blue writes the fix; CI tests it. CI passes; Ghost-the-tester runs it on real hardware. Ghost approves; branch protection enforces it. Merge happens; deploy fires automatically. No single agent can corrupt the pipeline because no single agent owns more than one link.
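The separation-of-duties property can be stated as two checks over the chain. No producer verifies its own output, and no actor produces more than one link. This is a minimal sketch. It models the chain from this section as (stage, producer, verifier) tuples, and it treats Ghost's QA and tester roles as separate actors, following the post's "different role, same GitHub identity". That modeling choice is an assumption.

```python
from collections import Counter

# (stage, producer, verifier), following the chain described in the prose.
CHAIN = [
    ("file issue", "Ghost (QA)", "Blue"),          # Blue must reproduce it, can't dismiss it
    ("write fix", "Blue", "CI"),
    ("pass CI", "CI", "Ghost (tester)"),
    ("approve", "Ghost (tester)", "branch protection"),
    ("merge", "branch protection", "deploy workflow"),
]


def separation_violations(chain: list[tuple[str, str, str]]) -> list[str]:
    """Flag self-verified links and actors that own more than one link."""
    problems = []
    for stage, producer, verifier in chain:
        if producer == verifier:
            problems.append(f"{stage}: {producer} verifies its own output")
    owned = Counter(producer for _, producer, _ in chain)
    for actor, count in owned.items():
        if count > 1:
            problems.append(f"{actor} owns {count} links")
    return problems
```

Adding a link such as ("merge own PR", "Blue", "Blue") would trip both checks, which is exactly the case branch protection is there to prevent.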

What I do now

I architect. I make the calls Blue can’t make. I read the lessons directory to see what patterns are emerging: three regressions in serialization in a month means I need to tighten the contract. I review the occasional PR where Ghost’s verification was inconclusive and I want a second eyeball. I decide what the next feature is and write the spec. Mostly, though, I’m in the garden. The dogs (Lev and Po, shepherd-pit mixes, my whole heart) figured out months ago that “Ben checking his phone” is now correlated with “Ben staying outside longer,” because the alternative used to be Ben going back inside to type.
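Spotting a pattern like "three serialization regressions in a month" is a simple aggregation over the lessons directory. This minimal sketch makes two assumptions. First, lesson filenames start with a YYYY-MM-DD date, as the docs/lessons/2026-04-26 reference suggests. Second, each entry carries an "area" tag, which is a hypothetical field the post does not describe. The threshold of three comes from the example above.

```python
from collections import Counter


def hot_spots(lessons: list[tuple[str, str]], threshold: int = 3) -> list[tuple[str, str, int]]:
    """Return (month, area, count) for areas with at least `threshold` lessons in a month.

    `lessons` holds (filename, area) pairs; filenames are assumed to begin with YYYY-MM-DD.
    """
    counts = Counter((name[:7], area) for name, area in lessons)  # name[:7] -> "YYYY-MM"
    return sorted((month, area, n) for (month, area), n in counts.items() if n >= threshold)
```

Grouping by calendar month is a simplification; a rolling 30-day window would catch clusters that straddle a month boundary.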

In the wood shop I’m building a new bench, mortise-and-tenon joinery, hand-cut. It takes the kind of attention that Krill development used to take from me before the agents existed. The two are not in tension; the agents free up the attention, and I spend it on things that need a human’s hands.

This is not the future of software engineering. It’s the future of senior software engineering. The agents are mine because the codebase is mine and the patterns are mine and the lessons file is mine, all written down in a form they can read and follow. They are not replacements. They are amplifiers, and amplifiers only work on a clean signal.

The year I spent on the foundation is what makes them possible. The thirty years before that is what makes the year possible.

Anyone who tries to skip the foundation and jump straight to the agents will discover, the hard way, that the agents accelerate whatever direction they’re pointed in. Pointed at a clean architecture, they make it cleaner. Pointed at a swamp, they help you sink faster. Build the foundation. Then bring on the staff. Then go to the garden.